In recent months, there has been a big push for self service business intelligence tools by Microsoft. The center of this universe is Microsoft Excel, a common application used by the typical data analyst.

The following four products started off as add-ins for Excel. Now, some of them are a integral part of MS Office 2013.

- Power Query

- Power Pivot

- Power View

- Power Map

It is not surprising that these four business intelligence products have an offering in Office 365. I really like office 365 as a platform to share the final analysis with your team, department and/or company. Like any data in the cloud, caution should be taken when it is confidential information that is governed by auditing rules like HIPAA or such.

Today, I will start talking about Power Query which is a self service ETL tool. This tool was released in August of 2013. The online help is a good place to start learning about this tool.

Because these tools are relatively new, I found out that once in a while the add-in will freeze. I suggest using a virtual machine so that rebooting the operating system does not cause you real down time. I am hoping that these tools mature over time and this annoyance will go away.

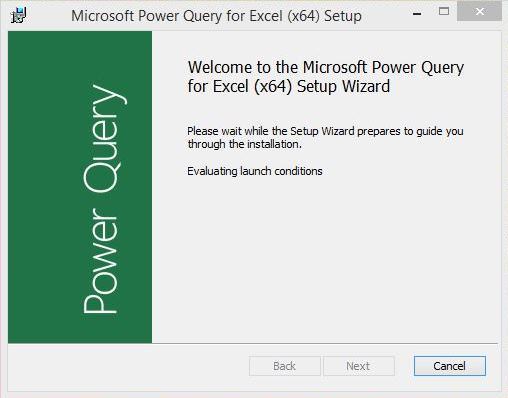

The first step in using Power Query is to download the add-in here from the Microsoft site. Please read the system requirements. This version requires the Dot Net Framework 3.5. You might have to install this first as a prerequisite. Next, run the setup program as shown here.

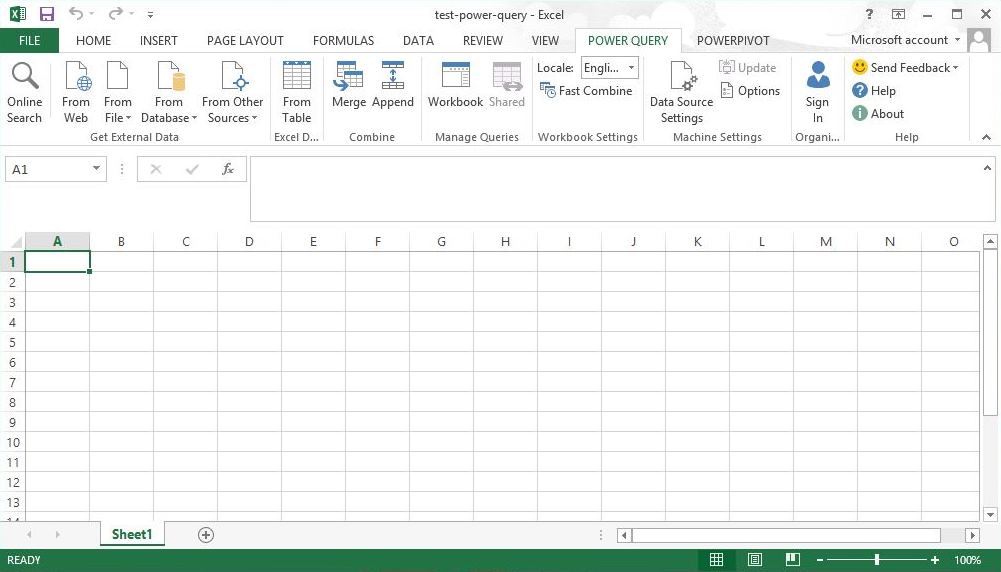

The Power Query tool is built to grab data from various sources and mash it up in MS Excel.

The data can be stored as either a worksheet with a million row limit. Or in the data model that is managed by the xVelocity in-memory analytic engine. This engine uses a column store philosophy that does very well with non-unique data. It can produce some high compression ratios.

Okay, enough about the tool. Let’s get down to business by extracting, translating and loading (ETL) data into Microsoft Excel.

The get external data section of the ribbon is where we will start.

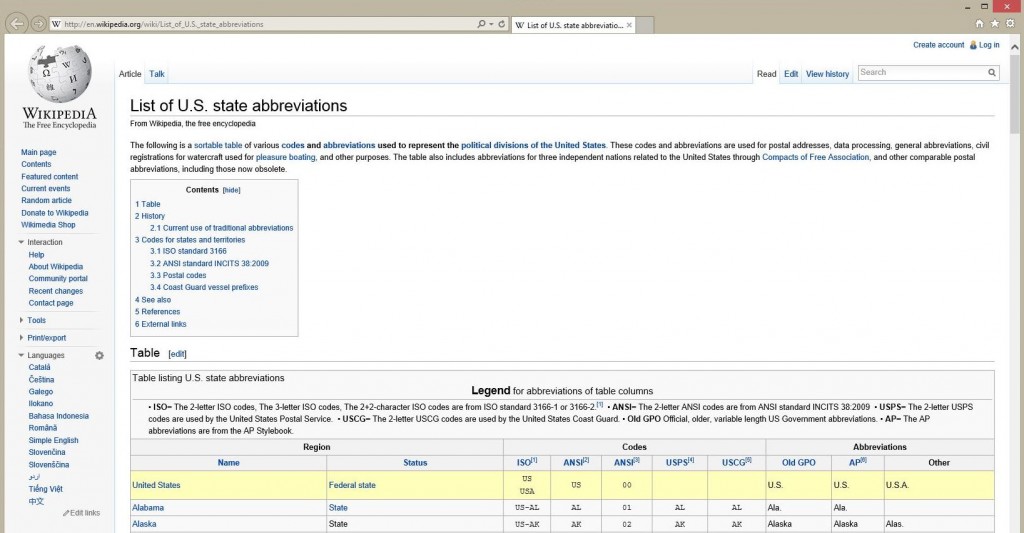

I know there is a data set in Wikipedia that has abbreviations for US states in a United States Postal Service (USPS) format. I want to add this data to my model for analysis.

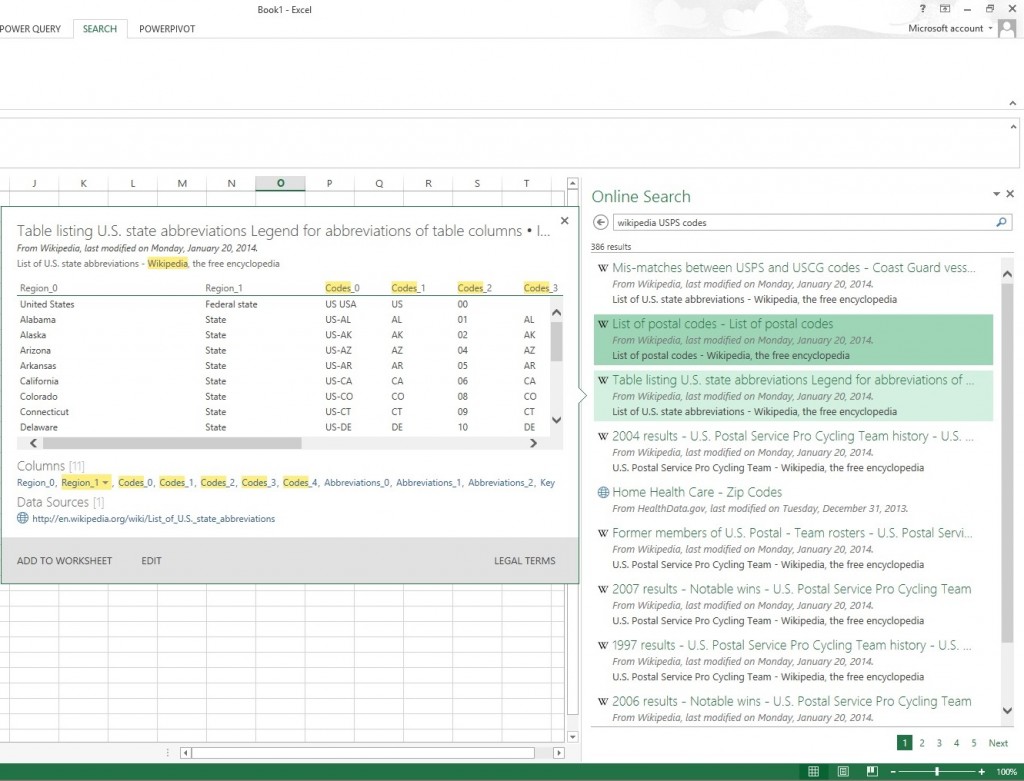

By clicking the on-line search button, I can enter the key words to find this public data set.

Choose the edit option to start translating the data.

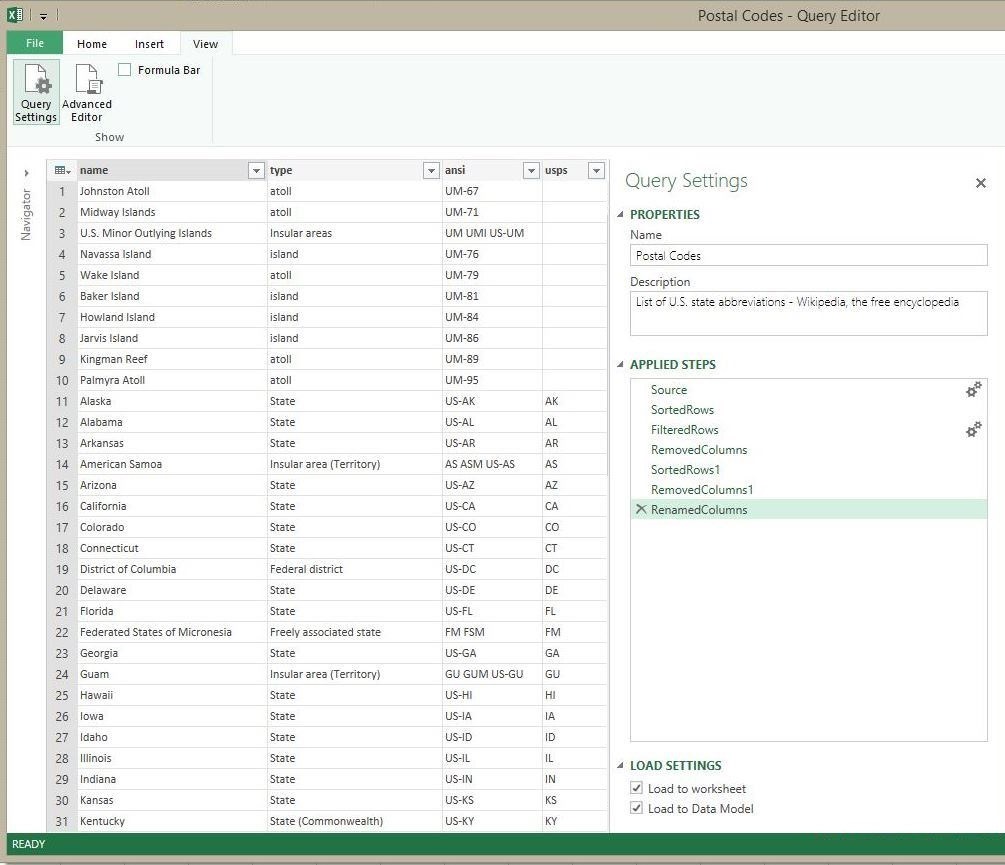

The Query editor allows you to apply translations to the source data. Each step is saved so that it can be replayed during a refresh (re-import). Please note, the load settings determine the ultimate destination for the data.

Here are some of the translations I performed to cleanup the USPS data.

- Get Source Data

- Sort Rows

- Filter Rows

- Remove Columns

- Rename Columns

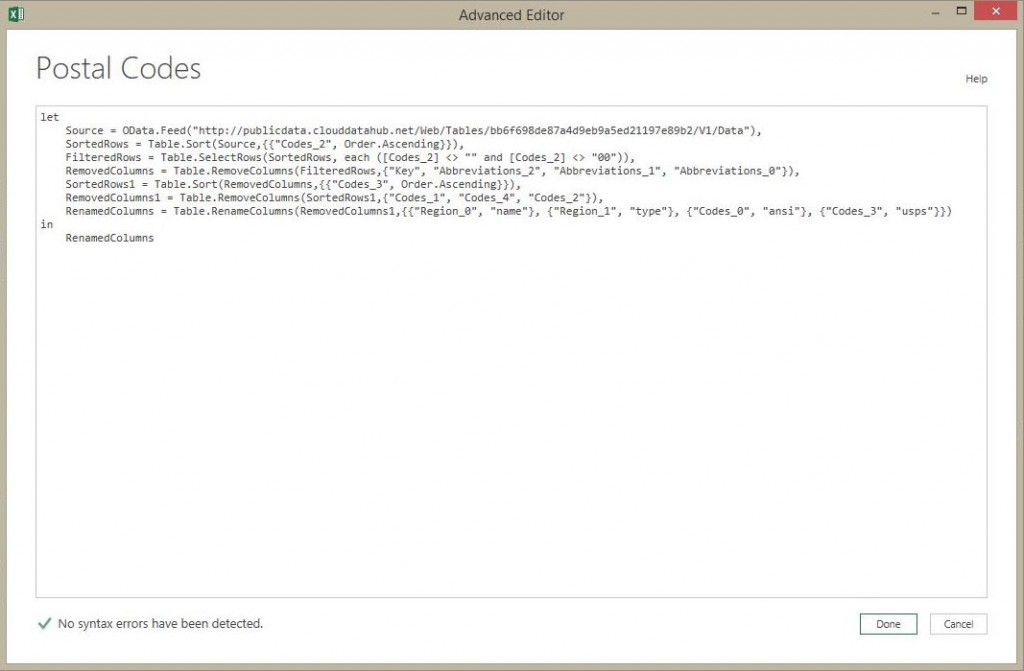

Just like many tools, the Power Query is built upon a language. Clicking the advanced editor brings up the Power Query formula that matches the saved steps.

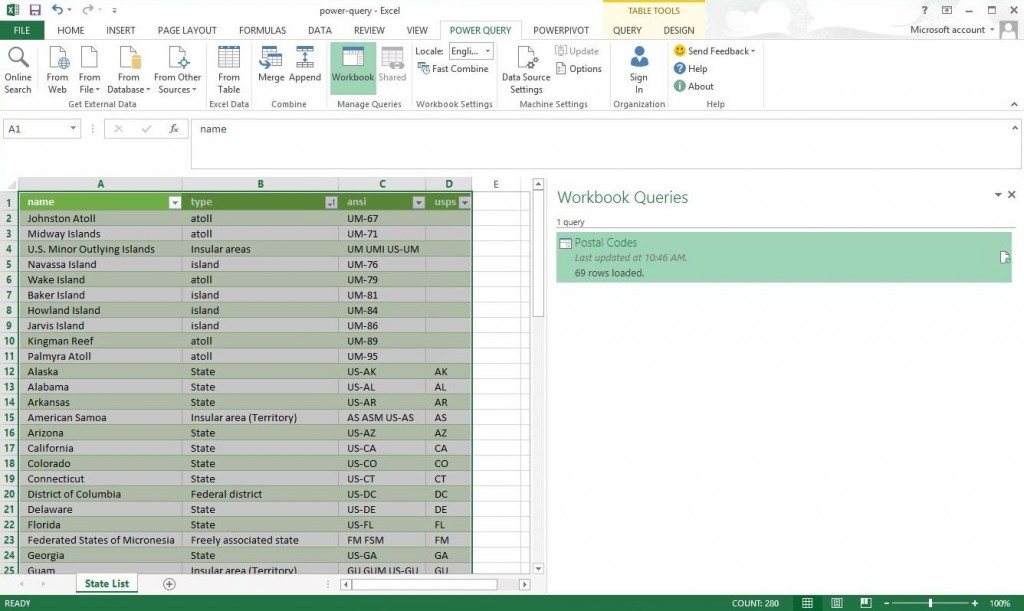

At the end of the process, we want the NAME, TYPE, ANSI and USPS columns for each state. The workbook query should be named “Postal Codes” and the worksheet should be named “State List”. Please note, we have eleven island like locations in the data for a total of 69 rows.

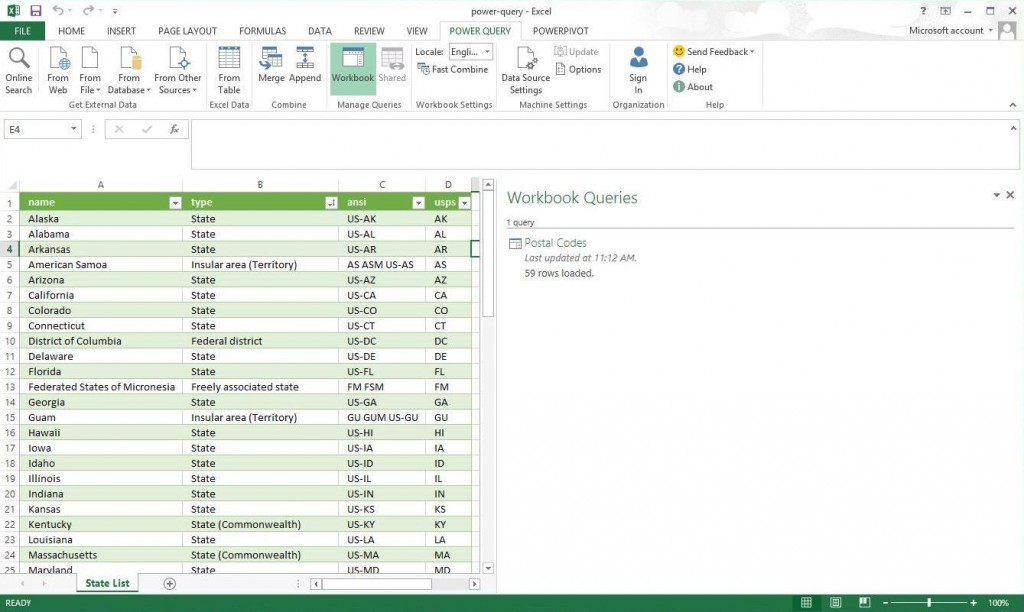

We can edit the saved query and filter out these rows. The resulting data set has 59 rows.

In summary, the Power Query tool allows a novice to put together a bunch of transformations (steps) into a package called a workbook query. These steps can be replayed to manually load data on a whim. The destination location of the information, selected by the user, can be either a worksheet or the xvelocity data model.

With the demand of business intelligence rising and the number of students graduating with a Computer Science degree declining, I can see the tool become popular for departments that need to get things done with a limited amount of help from IT.

In upcoming articles, I will be going over each section of the ribbon. Next time, I will talk about why Power Query is very flexible given the number of import options.