The BCP command line utility is the Cadillac of ETL programs for text based data files. It is REALLY FAST. It can perform both imports and exports and it can generate format files from existing objects.

Today, I am going to implement the same business algorithms I did earlier (Part 2), using the BCP program instead of BULK INSERT. We will again be working with the hypothetical Boy Scouts of America (BSA) database. I will be using xp_cmdshell to execute BCP from a query window inside SQL Server Management Studio (SSMS).
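Since xp_cmdshell is turned off by default, the examples only work once it has been enabled. A minimal sketch, assuming you are a sysadmin on a test server where enabling it is acceptable:

```sql
-- Expose the advanced server options so xp_cmdshell can be configured.
EXEC sp_configure 'show advanced options', 1;
RECONFIGURE;
GO

-- Turn on xp_cmdshell (1 = enabled, 0 = disabled).
EXEC sp_configure 'xp_cmdshell', 1;
RECONFIGURE;
GO
```

Setting the value back to 0 when the load is finished keeps the surface area of the server small.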

I want to stress that the following steps should be done before, during, and after a major data load.

| TIMING | TSQL COMMAND | BUSINESS RULE |
|---|---|---|
| BEFORE | BACKUP DATABASE | Back up the database before changes. |
| BEFORE | ALTER DATABASE | Set recovery model to bulk logged. |
| DURING | BCP | Execute ETL processes. |
| DURING | BACKUP LOG | Keep log growth to a minimum. |
| AFTER | ALTER DATABASE | Set recovery model to full. |
| AFTER | BACKUP DATABASE | Back up the database after changes. |
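The steps in the table can be sketched in T-SQL as follows. The backup folder and file names are hypothetical placeholders, so adjust them to your own environment.

```sql
-- BEFORE: full backup, then switch to bulk-logged recovery.
-- NOTE: C:\BACKUP\ and the file names are assumptions for illustration.
BACKUP DATABASE [BSA] TO DISK = N'C:\BACKUP\BSA_BEFORE.BAK' WITH INIT;
GO
ALTER DATABASE [BSA] SET RECOVERY BULK_LOGGED;
GO

-- DURING: run the BCP loads here, backing up the log between large loads.
BACKUP LOG [BSA] TO DISK = N'C:\BACKUP\BSA_LOAD.TRN';
GO

-- AFTER: return to full recovery and take a fresh full backup.
ALTER DATABASE [BSA] SET RECOVERY FULL;
GO
BACKUP DATABASE [BSA] TO DISK = N'C:\BACKUP\BSA_AFTER.BAK' WITH INIT;
GO
```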

The BSA database has a STAGE schema for loading external data. In its simplest form, BCP requires the source data file to have the same number of columns as the target table. Therefore, we need to re-create the table with just two fields.

There are many arguments that change how the utility executes. Here are the ones used in the examples below. Note that the switches are case sensitive.

| BCP SWITCH | ACTION |
|---|---|
| -F | first row to load is row x. |
| -L | last row to load is row y. |
| -t | field terminator (tab by default). |
| -r | row terminator (newline by default). |
| -T | use a trusted connection. |
| -m | max errors to allow. |
| -c | perform operation using char data type. |
| -E | use identity values in file. |
| -f | use the specified format file. |
| -b | z rows per batch before commit. |
| -h | specify advanced hints to use. |

The first example re-creates the table and imports the data.

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 |

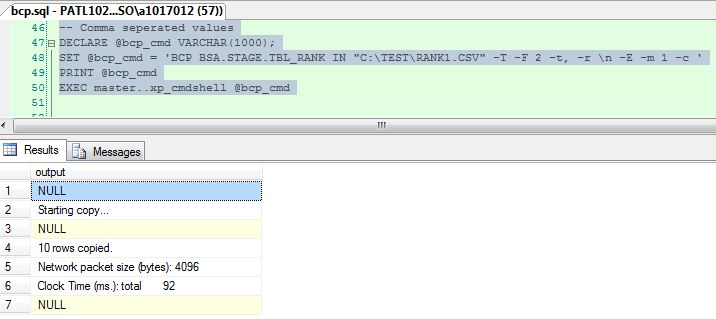

<span style="color: #008000;font-size:small;">-- Select BSA database USE BSA; GO -- Remove the table DROP TABLE [STAGE].[TBL_RANK]; GO -- Table w/o default fields CREATE TABLE [STAGE].[TBL_RANK] ( [RANK_ID] [int] IDENTITY(1,1) NOT NULL, [RANK_DESC] [varchar](50) NOT NULL, CONSTRAINT [PK_TBL_RANK] PRIMARY KEY CLUSTERED ([RANK_ID] ASC) ) GO -- BCP - Import Comma Seperated Value File DECLARE @bcp_cmd VARCHAR(1000); SET @bcp_cmd = 'BCP BSA.STAGE.TBL_RANK IN "C:\TEST\RANK1.CSV" -T -F 2 -t, -r \n -E -m 1 -c ' PRINT @bcp_cmd EXEC master..xp_cmdshell @bcp_cmd GO </span> |
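When a load like this runs unattended, it can help to check whether the command succeeded. The sketch below is a variant of the last few statements of the first example, not an extra step: xp_cmdshell returns 0 for success and 1 for failure, so the return code can be captured and tested. The error message text and the ROWS_LOADED alias are my own. A zero return code may not catch rows that were rejected within the -m limit, so treat the row count as the real check.

```sql
-- Variant of the import step that tests the xp_cmdshell return code.
DECLARE @bcp_cmd VARCHAR(1000);
DECLARE @rc INT;

SET @bcp_cmd = 'BCP BSA.STAGE.TBL_RANK IN "C:\TEST\RANK1.CSV" -T -F 2 -t, -r \n -E -m 1 -c';

-- xp_cmdshell returns 0 (success) or 1 (failure).
EXEC @rc = master..xp_cmdshell @bcp_cmd;

IF @rc <> 0
    RAISERROR('BCP import of RANK1.CSV failed, return code %d.', 16, 1, @rc);
ELSE
    SELECT COUNT(*) AS ROWS_LOADED FROM [STAGE].[TBL_RANK];
GO
```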

I am going to make the problem a little more difficult by adding back the three fields that have default values. How do we now import a file that has fewer columns than the table?

```sql
-- Add original fields
ALTER TABLE [STAGE].[TBL_RANK]
ADD [ROW_GUID] [uniqueidentifier] ROWGUIDCOL NULL,
    [MODIFIED_DTE] [datetime] NULL,
    [MODIFIED_NM] [varchar](20) NULL;
GO

-- Add original constraints
ALTER TABLE [STAGE].[TBL_RANK] ADD CONSTRAINT [DF_TR_ROW_GUID] DEFAULT (newsequentialid()) FOR [ROW_GUID]
GO

ALTER TABLE [STAGE].[TBL_RANK] ADD CONSTRAINT [DF_TR_MODIFIED_DTE] DEFAULT (getdate()) FOR [MODIFIED_DTE]
GO

ALTER TABLE [STAGE].[TBL_RANK] ADD CONSTRAINT [DF_TR_MODIFIED_NM] DEFAULT ('BSA - SYSTEM') FOR [MODIFIED_NM]
GO
```

That is where format files come in handy. We are going to use the BCP utility to create a format file from the table definition. From there, I am going to modify it by removing the rows for columns that are not in the source file and mapping the remaining fields to their target columns. Run the following from a DOS command shell. All files used in the examples can be found at the end of the article.

```bat
rem
rem Run from cmd shell, create non xml format file, copy to final target and edit.
rem
bcp BSA.RECENT.TBL_RANK format nul -T -n -f rank2-all.fmt
copy rank2-all.fmt rank2.fmt
```
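To give a feel for the edit, here is a rough sketch of what a trimmed, non-XML format file for a two-field, tab-delimited source could look like. It is not the actual rank2.fmt from the downloads. It assumes a character-mode layout (what the -c switch generates, rather than -n), a 10.0 version header (SQL Server 2008), a server default collation of SQL_Latin1_General_CP1_CI_AS, and a source file that holds only RANK_ID and RANK_DESC.

```text
10.0
2
1       SQLCHAR       0       12      "\t"       1     RANK_ID       ""
2       SQLCHAR       0       50      "\r\n"     2     RANK_DESC     SQL_Latin1_General_CP1_CI_AS
```

The second line is the number of fields in the data file. Each row after that gives the field order in the file, the file data type, prefix length, maximum data length, terminator, target column order, target column name, and collation. The three default columns have no row, so the load leaves them to their default constraints.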

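Some arguments of interest are used in this example. The first row (-F) and last row (-L) switches, the counterparts of FIRSTROW and LASTROW in BULK INSERT, select a subset of the source data file. The batch size (-b, or BATCHSIZE) makes the database engine commit each batch of rows as its own transaction instead of committing once at the end. This becomes really important as the number of records grows, because it frees up resources along the way. Last but not least, the format file (-f, or FORMATFILE) allows our custom file definition to be used.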

|

1 2 3 4 5 6 7 8 9 10 11 |

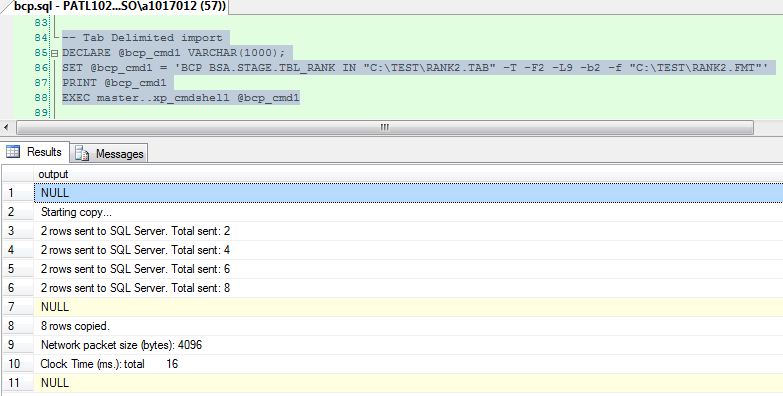

<span style="color: #008000;font-size:small;">-- Remove data from table TRUNCATE TABLE STAGE.TBL_RANK; GO -- BCP - Import Tab Delimited File DECLARE @bcp_cmd1 VARCHAR(1000); SET @bcp_cmd1 = 'BCP BSA.STAGE.TBL_RANK IN "C:\TEST\RANK2.TAB" -T -F2 -L9 -b2 -f "C:\TEST\RANK2.FMT"' PRINT @bcp_cmd1 EXEC master..xp_cmdshell @bcp_cmd1 GO </span> |
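For comparison with Part 2, the same load can be written as a BULK INSERT statement. This is only a sketch to show how the BCP switches line up with the BULK INSERT options. It assumes the same data and format files, and that the paths are visible to the SQL Server service.

```sql
-- Same load as the BCP command above, expressed as BULK INSERT.
TRUNCATE TABLE STAGE.TBL_RANK;
GO

BULK INSERT BSA.STAGE.TBL_RANK
FROM 'C:\TEST\RANK2.TAB'
WITH
(
    FIRSTROW = 2,                     -- bcp -F2
    LASTROW = 9,                      -- bcp -L9
    BATCHSIZE = 2,                    -- bcp -b2
    FORMATFILE = 'C:\TEST\RANK2.FMT'  -- bcp -f
);
GO
```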

The last argument that I want to introduce today is the FIRE_TRIGGERS hint, which allows insert triggers on the target table to be executed during a BCP load. By default they do not fire. This is an easy way to move data from one table to another, here from the STAGE to the RECENT schema. The snippet below adds the trigger to the staging table and imports the data.

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 |

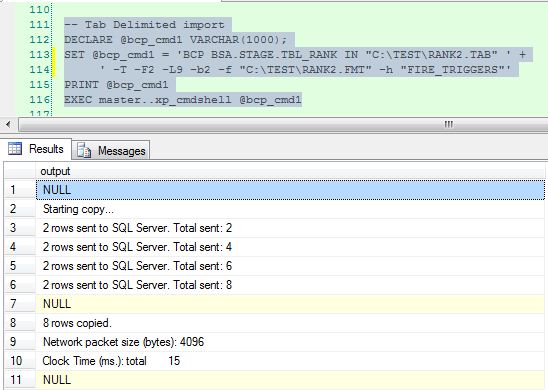

<span style="color: #008000;font-size:small;">-- Remove data from table TRUNCATE TABLE STAGE.TBL_RANK; GO -- Remove data from table TRUNCATE TABLE RECENT.TBL_RANK; GO -- Add trigger to staging table CREATE TRIGGER TRG_STAGE_2_RECENT ON [STAGE].[TBL_RANK] FOR INSERT AS INSERT INTO [RECENT].[TBL_RANK] (RANK_DESC) SELECT i.RANK_DESC FROM inserted i GO -- BCP - Import Data with triggers DECLARE @bcp_cmd1 VARCHAR(1000); SET @bcp_cmd1 = 'BCP BSA.STAGE.TBL_RANK IN "C:\TEST\RANK2.TAB" -T -F2 -L9 -b2 -f "C:\TEST\RANK2.FMT" -h "FIRE_TRIGGERS"' PRINT @bcp_cmd1 EXEC master..xp_cmdshell @bcp_cmd1 </span> |
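A quick way to confirm that the trigger did its job is to compare row counts in the two schemas after the load. The sketch below uses column aliases of my own choosing. With FIRE_TRIGGERS the trigger runs once per batch, so with -b2 the inserted table holds two rows at a time.

```sql
-- Compare row counts in the two schemas after the load.
SELECT 'STAGE' AS SCHEMA_NM, COUNT(*) AS ROW_CNT FROM [STAGE].[TBL_RANK]
UNION ALL
SELECT 'RECENT' AS SCHEMA_NM, COUNT(*) AS ROW_CNT FROM [RECENT].[TBL_RANK];
GO

-- Optional cleanup: drop the trigger so later loads do not copy rows again.
-- A DML trigger always lives in the schema of its table, hence [STAGE].
DROP TRIGGER [STAGE].[TRG_STAGE_2_RECENT];
GO
```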

Importing data with the BCP command line utility is one of the fastest ways to move large amounts of data. There are many options that can be specified to change the behavior of the program. A format file can be used to skip columns or define additional mappings.

Many ETL programs, such as iWay DataMigrator, use a graphical interface to draw process flows. But under the covers, the core engine uses BCP or SQL*Loader, a data file, and a format file to get the job done quickly.

The BCP utility is a great choice for creating ETL processes. I will be exploring using the utility next time to export data.

Files Used In Examples

| FILE NAME | PURPOSE |
|---|---|
| rank1.csv | Scout Rank Data (CSV) |
| rank2.tab | Scout Rank Data (TAB) |
| rank2.fmt | Modified format file |
| rank2-all.fmt | BCP format file based on table |