A Microsoft SQL Server database is composed of at least two operating system files: a primary data file (*.mdf) and a transaction log file (*.ldf). There might even be several secondary data files (*.ndf) if you have a Crafty database administrator (DBA) on staff. Today, we are going to talk about how these files grow and how they can be monitored.

A Microsoft SQL Server database is composed of at least two operating system files: a primary data file (*.mdf) and a transaction log file (*.ldf). There might even be several secondary data files (*.ndf) if you have a Crafty database administrator (DBA) on staff. Today, we are going to talk about how these files grow and how they can be monitored.

The strength of Microsoft SQL Server is the ease in which an accidental DBA can setup a database system in mere minutes. This same feature can be it’s downfall or Achilles heel. The default settings of a new database are set to 1 MB auto growth and unlimited max size on the data files and 10% auto growth and 2 GB max size on the log files.

These are non optimal settings will cause disk fragmentation and possible large number of virtual log files (VLFs). I suggest you change them once a new database is created. Growing files is a costly operation and should be avoided if it is critical to have quick database response times.

Greg Larson has written an excellent article on the subject. There is TSQL code to determine which databases still have the default settings. Also, there is TSQL code to parse the default trace file to find out when the last growth occurred and how costly it was. This is really cool code!

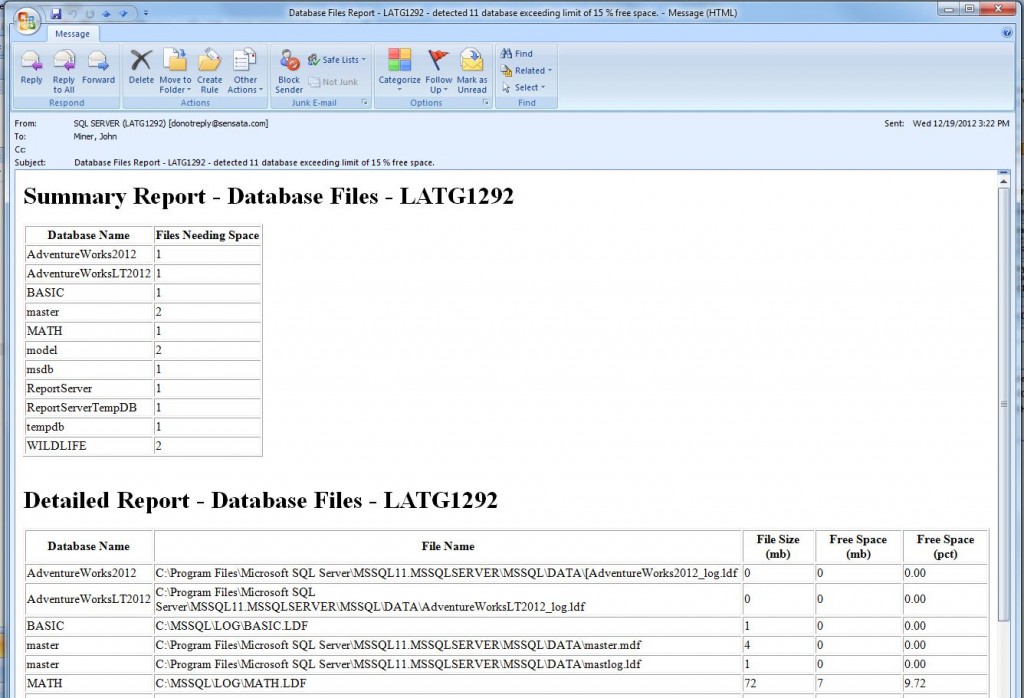

My goal is to present the database administrator (DBA) at a small company on a limited budget a stored procedure that can detect the amount of free space and email you files that exceed a threshold.

For instance, tell me all the database files that do not have 15% free space so that I can grow them overnight when the load on the server is lower. The auto growth setting should be your last defense, not your first one.

I start off all my coding efforts using pseudo code. This makes sure I have a plan of attack even before I type a single stroke.

|

1 2 3 4 5 6 7 8 9 10 11 |

1 - User parameters A - What action to perform (print / email / monitor)? B - Min free space to have on hand. C - Email distribution list. D - Optional email profile name. 2 - Get a list of all database files. 3 - Capture info that might be of interest. 4 - Database exceeds min free space, mark row as Y. 5 - Action = 'print', show data on output screen. 6 - Action <> 'print' and count(rows marked Y) > 0, email db files html report. 7 - Action = 'monitor', save raw data to 1 yr history table. |

Here are some interesting things to notice. I built upon the script that Tim Ford created. I chose to use a calculated field inside the table variable to figure out the free space. It makes the code a little more cleaner. Error checking has been added so that any unplanned errors will be detected.

I did not check that database mail is installed or a valid default email profile is setup. This is your responsibility to make sure those requirements are meet.

Last but not least, this is a complete stored procedure that you can schedule on each SQL Server instance. Call the stored procedure with the monitoring mode option to retain one years worth of history.

I suggest running it once a week to keep an eye on the size of your database files. You can write reports against this table to plot size trending as well as percentage of change between two time periods.

Enclosed is the finished stored procedure for your usage.

Below is a sample call to the procedure. I am using the default email profile.

|

1 2 3 4 5 |

-- If less than 15% free space, send me an email EXEC [msdb].[dbo].[usp_monitor_dbsize] @var_option = 'monitor', @var_min_free_pct = 15, @var_email_list = 'jminer@sensata.com'; |

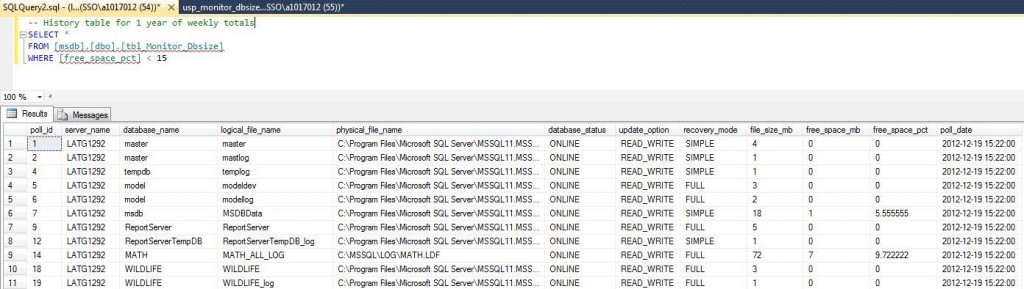

A sample select from history table with the results displayed in the SQL Server Management Studio (SSMS) output window is shown below.

|

1 2 3 4 |

-- History table, show files with less than 15% free space SELECT * FROM [msdb].[dbo].[tbl_Monitor_Dbsize] WHERE [free_space_pct] < 15 |

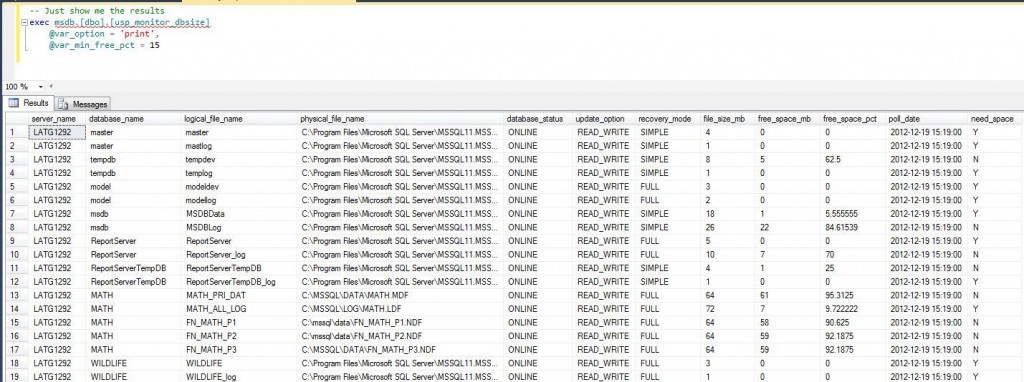

This sample call to display the results in SSMS output window. Please note, this will return all database files with the ones exceeded the threshold marked with a ‘Y’.

|

1 2 3 4 |

-- Just show me the results exec msdb.[dbo].[usp_monitor_dbsize] @var_option = 'print', @var_min_free_pct = 15 |

I want to recap this blog with the following message, “Do not depend on auto growth to size your databases if you want maximum performance”. Growing databases should be reserved as an off hours activity. The enclosed stored procedure can be used to track database file size over time.

Look at Greg’s script that identifies databases that have the default growth settings. Please change these setting to avoid disk fragmentation and virtual log file explosion.

I hope you enjoy the enclosed code. If you find any issues with the script, please email me.