I recently worked on a project in which I redesigned a sales data warehouse as a star schema, using daily file partitions with an automatic sliding window and page-level data compression. I ended up reducing a 5-terabyte database to less than 750 GB. I will be writing several articles on the lessons I learned during the process.

Today, I want to talk about how to generate a hash key by using two built-in SQL Server functions. A hash function is any algorithm that maps a large data set of variable-length keys to a smaller data set of fixed-length keys.
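To make the definition concrete, here is a minimal Python sketch using the standard `hashlib` module. It is only a stand-in for illustration, not SQL Server's implementation: inputs of very different lengths all map to a fixed-length 16-byte MD5 digest. The helper name `md5_key` is hypothetical.

```python
import hashlib

def md5_key(text: str) -> bytes:
    """Map a string of any length to a fixed-length 16-byte MD5 digest."""
    return hashlib.md5(text.encode("utf-8")).digest()

# Inputs of very different lengths...
for text in ["FTW", "34HM118-1USDFTW34HM", "x" * 5000]:
    digest = md5_key(text)
    # ...always produce a 16-byte (32 hex character) key.
    print(f"{len(text):>5} chars -> {len(digest)} bytes: {digest.hex()}")
```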

One of the business requirements in the data warehouse was to have 15 different reporting-level columns; each unique combination of their values represents one reporting level. In SQL Server versions before 2016, an index key is limited to 16 columns and 900 bytes. Adding an index on all 15 columns is not feasible, since their combined size can easily exceed 900 bytes (the four VARCHAR(240) columns in the example below can already hold up to 960 bytes).

The prior BI developer was joining on all 15 columns in the SSIS package. Executing the package resulted in a full table scan when joining the source data to the reporting level dimension to look up the surrogate key. This can be a major performance issue on large tables. How do we speed up the join?

The solution to this join problem is to use a hash key, which should allow the query optimizer to choose an Index Seek for the join. Basically, we apply a hash function to the 15 columns to produce a single number or binary string that identifies the combination. This hash key is indexed and used as the natural key in the reporting levels dimension table.
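As a rough in-memory analogy (not SSIS or the SQL Server optimizer), the Python sketch below builds a lookup keyed on one narrow hash instead of comparing every column. It assumes four columns in place of fifteen, non-NULL string values, and a `|` delimiter that never appears in the data; `hash_key` and `dim_lookup` are hypothetical names.

```python
import hashlib

def hash_key(*columns: str) -> bytes:
    """Collapse several natural-key columns into one 16-byte MD5 key.

    Assumption: columns are non-NULL strings joined with '|', a character
    assumed never to appear in the data.
    """
    return hashlib.md5("|".join(columns).encode("utf-8")).digest()

# Dimension rows: surrogate key -> natural-key columns (4 here instead of 15).
dimension = {
    1: ("34HM118-1", "USD", "FTW", "34HM"),
    2: ("35HM118-1", "USD", "FTW", "35HM"),
}

# Build the lookup once: one narrow hash key per reporting level.
dim_lookup = {hash_key(*cols): sk for sk, cols in dimension.items()}

# Each source row finds its surrogate key with a single probe on the hash
# instead of comparing every column.
source_rows = [
    ("35HM118-1", "USD", "FTW", "35HM"),
    ("34HM118-1", "USD", "FTW", "34HM"),
]
surrogate_keys = [dim_lookup.get(hash_key(*row)) for row in source_rows]
print(surrogate_keys)  # [2, 1]
```

In the database, the indexed computed column plays the role of `dim_lookup`: the join compares one 16-byte value per row.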

Expanding the BASIC TRAINING database, I am going to use the following T-SQL snippet to create a reporting levels dimension table. I am going to create the hash key as a computed column using the CHECKSUM() function.

```sql
-- Use the correct database
USE [BASIC];
GO

-- Delete existing table
IF OBJECT_ID(N'[TRAINING].[DIM_REPORTING_LEVELS]') IS NOT NULL
    DROP TABLE [TRAINING].[DIM_REPORTING_LEVELS];
GO

-- Create new table
CREATE TABLE [TRAINING].[DIM_REPORTING_LEVELS]
(
    RPTLVL_KEY INT IDENTITY(1,1) NOT NULL
        CONSTRAINT PK_DIM_REPORTING_LEVELS PRIMARY KEY,
    RPTLVL_PRODUCT_ID VARCHAR(240) NOT NULL,
    RPTLVL_CURRENCY VARCHAR(240) NOT NULL,
    RPTLVL_ORGANIZATION VARCHAR(240) NOT NULL,
    RPTLVL_PRODUCT_FAMILY VARCHAR(240) NOT NULL
);
GO

-- Record one
INSERT INTO [TRAINING].[DIM_REPORTING_LEVELS]
    (RPTLVL_PRODUCT_ID, RPTLVL_CURRENCY, RPTLVL_ORGANIZATION, RPTLVL_PRODUCT_FAMILY)
VALUES ('34HM118-1', 'USD', 'FTW', '34HM');

-- Record two
INSERT INTO [TRAINING].[DIM_REPORTING_LEVELS]
    (RPTLVL_PRODUCT_ID, RPTLVL_CURRENCY, RPTLVL_ORGANIZATION, RPTLVL_PRODUCT_FAMILY)
VALUES ('35HM118-1', 'USD', 'FTW', '35HM');

-- Add a hash key using CHECKSUM()
ALTER TABLE [TRAINING].[DIM_REPORTING_LEVELS]
    ADD RPTLVL_HASH_KEY AS CHECKSUM(RPTLVL_PRODUCT_ID, RPTLVL_CURRENCY,
                                    RPTLVL_ORGANIZATION, RPTLVL_PRODUCT_FAMILY);
```

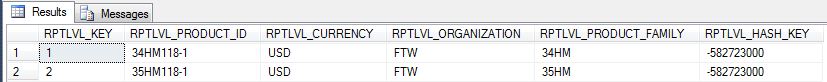

If you read Books Online closely, you will note that the CHECKSUM() function does not guarantee uniqueness. The function takes a list of columns as input and returns a single integer as output.

Because that output is only 4 bytes, there are at most 2^32 (about 4.3 billion) possible values, and different inputs can map to the same value. When I initially used this function in the data warehouse, I found over 300 duplicates in 160,000 reporting levels. The two rows inserted above, for example, generate the same hash key.
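For a sense of scale, the expected number of colliding pairs for an ideal, uniformly distributed hash is C(n, 2) / 2^bits (by linearity of expectation). The Python sketch below computes it for the 160,000 levels mentioned above; `expected_collisions` is a hypothetical helper, and the "ideal hash" is an assumption, not a model of CHECKSUM() itself.

```python
def expected_collisions(n: int, bits: int) -> float:
    """Expected number of colliding pairs among n keys for an ideal
    (uniformly distributed) hash with 2**bits possible outputs."""
    pairs = n * (n - 1) / 2
    return pairs / 2 ** bits

levels = 160_000
print(f"32-bit (CHECKSUM size): {expected_collisions(levels, 32):.2f}")  # 2.98
print(f"128-bit (MD5 size):     {expected_collisions(levels, 128):.1e}")
```

An ideal 32-bit hash would be expected to produce only about three colliding pairs here, so the 300-plus duplicates observed suggest that CHECKSUM() spreads these similar-looking keys far from uniformly.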

```sql
-- Show duplicate data
SELECT * FROM [TRAINING].[DIM_REPORTING_LEVELS];
```

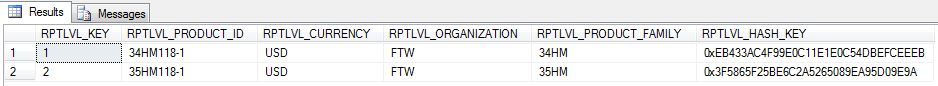

On the other hand, the HASHBYTES() function with the MD5 algorithm is far less likely to produce collisions, since it generates a 16-byte binary output. The function supports seven algorithms (MD2, MD4, MD5, SHA, SHA1, SHA2_256 and SHA2_512), with output sizes ranging from 16 to 64 bytes.
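The output sizes can be checked outside the database with Python's `hashlib`, used here only as a stand-in for the equivalent HASHBYTES() algorithms. MD2 and MD4 are left out as an assumption-free choice, because `hashlib` does not reliably provide them; the `algorithms` mapping is hypothetical.

```python
import hashlib

# HASHBYTES() algorithm name -> hashlib counterpart (MD2/MD4 omitted).
algorithms = {"MD5": "md5", "SHA1": "sha1", "SHA2_256": "sha256", "SHA2_512": "sha512"}

# digest_size is the fixed output length in bytes for each algorithm.
digest_sizes = {name: hashlib.new(py_name).digest_size for name, py_name in algorithms.items()}
print(digest_sizes)  # {'MD5': 16, 'SHA1': 20, 'SHA2_256': 32, 'SHA2_512': 64}
```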

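The code below drops the hash key column and recomputes it using the HASHBYTES() function. In SQL Server versions before 2016, its input is limited to 8,000 bytes of character or binary data. I suggest making sure the columns are not null, since concatenating a NULL value yields NULL (and therefore a NULL hash), and then concatenating all the columns into one string.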
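To show why NULL columns matter, here is a small Python model of the T-SQL behavior, assuming the default CONCAT_NULL_YIELDS_NULL ON setting. `sql_concat` and `hash_or_null` are hypothetical helpers that mimic `+` concatenation and HASHBYTES('MD5', ...); they are not SQL Server code.

```python
import hashlib
from typing import Optional

def sql_concat(*parts: Optional[str]) -> Optional[str]:
    """Mimic T-SQL '+' concatenation with CONCAT_NULL_YIELDS_NULL ON:
    a single NULL (None) operand makes the whole result NULL."""
    if any(p is None for p in parts):
        return None
    return "".join(parts)

def hash_or_null(text: Optional[str]) -> Optional[bytes]:
    """Mimic HASHBYTES('MD5', ...) returning NULL for a NULL input."""
    if text is None:
        return None
    return hashlib.md5(text.encode("ascii")).digest()

row = ("34HM118-1", "USD", None, "34HM")

# One NULL attribute is enough to wipe out the hash key.
print(hash_or_null(sql_concat(*row)))  # None

# Replacing NULL with '' first (like ISNULL/COALESCE in T-SQL) keeps a usable key,
# at the cost of treating NULL and '' as the same value.
cleaned = [p if p is not None else "" for p in row]
print(len(hash_or_null(sql_concat(*cleaned))))  # 16
```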

```sql
-- Drop the column
ALTER TABLE [TRAINING].[DIM_REPORTING_LEVELS]
    DROP COLUMN RPTLVL_HASH_KEY;
GO

-- Add a hash key using HASHBYTES()
ALTER TABLE [TRAINING].[DIM_REPORTING_LEVELS]
    ADD RPTLVL_HASH_KEY AS HASHBYTES('MD5',
        RPTLVL_PRODUCT_ID + RPTLVL_CURRENCY + RPTLVL_ORGANIZATION + RPTLVL_PRODUCT_FAMILY);
GO

-- Index the column for speed
CREATE INDEX IX_DIM_REPORTING_LEVELS
    ON [TRAINING].[DIM_REPORTING_LEVELS] (RPTLVL_HASH_KEY);
GO

-- Show unique data
SELECT * FROM [TRAINING].[DIM_REPORTING_LEVELS];
```

In summary, a hash function can be used when multiple columns have to be collapsed into one narrow key column. While the CHECKSUM() function is available in SQL Server, I would avoid it for this purpose, since its 4-byte output produces too many collisions.

A better choice is to design the computed column with the HASHBYTES() function, whose output of 16 bytes or more makes collisions far less likely.

Thank you for this tip. The HASHBYTES() function is also a good way to compare records in two tables to find needed updates: just compare the old, stored hash to the newly computed hash.
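The change-detection idea in this comment can be sketched in Python as follows. This is a hedged illustration, not the commenter's code: it assumes one stored hash per natural key, non-NULL string attributes joined with a `|` delimiter, and made-up sample values; `row_hash`, `stored`, and `incoming` are hypothetical names.

```python
import hashlib

def row_hash(*attributes: str) -> bytes:
    """Hash the non-key attributes of a row (hypothetical helper).
    Assumes non-NULL strings joined with a '|' delimiter."""
    return hashlib.md5("|".join(attributes).encode("utf-8")).digest()

# Stored dimension: natural key -> hash of its attributes at the last load.
stored = {"34HM118-1": row_hash("USD", "FTW", "34HM")}

# Incoming source rows: natural key -> current attributes (made-up values).
incoming = {
    "34HM118-1": ("EUR", "FTW", "34HM"),
    "36HM118-1": ("USD", "FTW", "36HM"),
}

to_insert, to_update = [], []
for key, attrs in incoming.items():
    new_hash = row_hash(*attrs)
    if key not in stored:
        to_insert.append(key)  # brand-new member
    elif stored[key] != new_hash:
        to_update.append(key)  # attributes changed since the last load

print(to_insert, to_update)  # ['36HM118-1'] ['34HM118-1']
```

Comparing one stored hash per row replaces a column-by-column comparison, at the small risk of missing a change if two different attribute sets ever hash to the same value.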

Hello,

You need to add a “splitter” (delimiter) to your HASHBYTES() calculation. For example, if you have two columns such as age and amount:

17 and 5000

1 and 7500

Both rows result in 175000 if you concatenate the two columns, so their hash is the same :-)

Regards, Joey.

Sorry, I forgot a zero: 1 and 7500 must be 1 and 75000 :) but I think my point is clear.
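The collision Joey describes is easy to reproduce. The Python sketch below uses MD5 from `hashlib` as a stand-in for HASHBYTES('MD5', ...) and assumes the numeric columns have already been converted to strings, as a CAST would do in T-SQL; `md5_hex` is a hypothetical helper.

```python
import hashlib

def md5_hex(text: str) -> str:
    return hashlib.md5(text.encode("ascii")).hexdigest()

row_1 = ("17", "5000")   # age 17, amount 5000
row_2 = ("1", "75000")   # age 1, amount 75000

# Plain concatenation: both rows become "175000", so the hashes match.
plain_1, plain_2 = "".join(row_1), "".join(row_2)
print(plain_1, plain_2, md5_hex(plain_1) == md5_hex(plain_2))  # 175000 175000 True

# With a delimiter the strings differ ("17|5000" vs "1|75000"), and so do the hashes.
delim_1, delim_2 = "|".join(row_1), "|".join(row_2)
print(delim_1, delim_2, md5_hex(delim_1) == md5_hex(delim_2))  # 17|5000 1|75000 False
```

The delimiter only helps if it never occurs inside the data itself. In T-SQL the same idea is `col1 + '|' + col2`, or CONCAT_WS('|', ...) on SQL Server 2017 and later.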

Why are NULL columns an issue with HASHBYTES()?

Anything concatenated with NULL results in NULL (with the default CONCAT_NULL_YIELDS_NULL ON setting), so the hash is NULL too.