The transaction log file records all transactions and the database modifications made by each connection. Heavy application processing against the database can make the transaction log grow quickly. Each physical log file is divided into smaller logical units called virtual log files (VLFs).

The transaction log file records all transactions and the database modifications made by each connection. Heavy application processing against the database can make the transaction log grow quickly. Each physical log file is divided into smaller logical units called virtual log files (VLFs).

If there is no auto-growth setting on the log file, the database will stop working, be marked as suspect, when you run out of space. Therefore, it is important to monitor database file space, both data and log, so this does not happen. I will present a solution to this problem later tonight.

Right now, we are going to be concerned with VLF fragmentation which can slow a system down. Several people such as Brad McGhee, Kalen Delaney, Kimberly Tripp, Greg Robidoux and David Levy have written excellent articles on this topic.

My goal is to present the database administrator (DBA) at a small company on a limited budget a stored procedure that can detect VLFs and optionally email you the results.

I start off all my coding efforts using pseudo code. This makes sure I have a plan of attack even before I type a single stroke.

|

1 2 3 4 5 6 7 8 9 10 11 |

1 - User parameters A - What action to perform (print / email / monitor)? B - Max VLF's allowed. C - Email distribution list. D - Optional email profile name. 2 - Get a list of databases that are on-line. 3 - For each database, grab the log information. 4 - Database exceeds the max VLF's, mark row as Y. 5 - Action = 'print', show data on output screen. 6 - Action <> 'print' and count(rows marked Y) > 0, email VLF html report. 7 - Action = 'monitor', save raw data to 1 yr history table. |

Here are some interesting things to notice. I built upon the script that David Levy created. His script had a bug in which it errors out when a database is off-line. Also, it does not work for SQL Server 2012 since the DBCC LOGINFO undocumented command changed the results of it’s output.

I chose to use temporary variables instead of tables since they go away without dropping. Error checking has been added so that any unplanned errors will be detected.

I did not check that database mail is installed or a valid default email profile is setup. This is your responsibility to make sure those requirements are meet.

Last but not least, this is a complete stored procedure that you can schedule on each SQL Server instance. Call the stored procedure with the monitoring mode option to retain one years worth of history. I suggest running it once a week to keep an eye on VLF’s.

Enclosed is the finished stored procedure for your usage.

A sample call to the procedure is the following. I am using the default email profile.

|

1 2 3 4 5 |

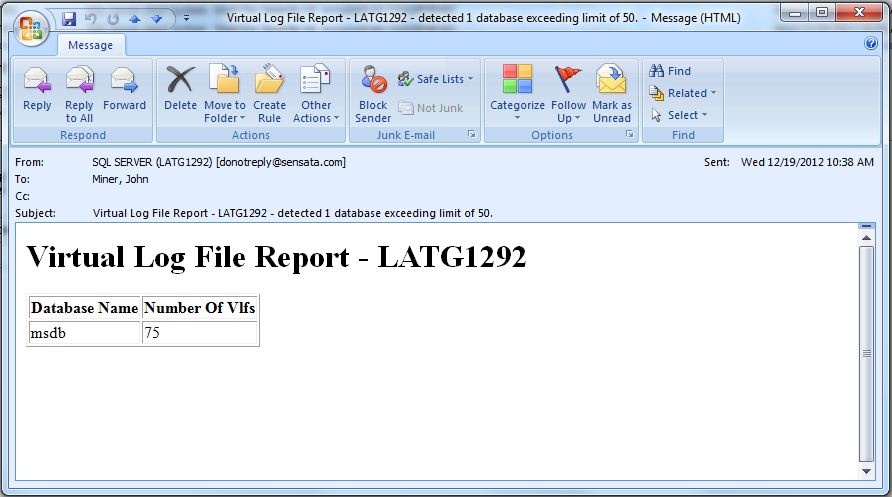

-- If > 50 VLF's, send me an email. Save VLFs data to history table. EXEC [msdb].[dbo].[usp_monitor_vlfs] @var_option = 'monitor', @var_max_vlfs = 50, @var_email_list = 'john@craftydba.com'; |

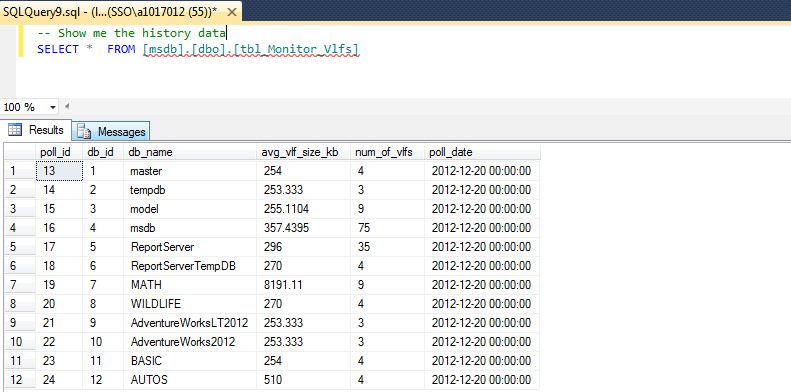

A sample select from history table with the results displayed in the SQL Server Management Studio (SSMS) output window.

|

1 2 |

-- Show me the history data SELECT * FROM [msdb].[dbo].[tbl_Monitor_Vlfs] |

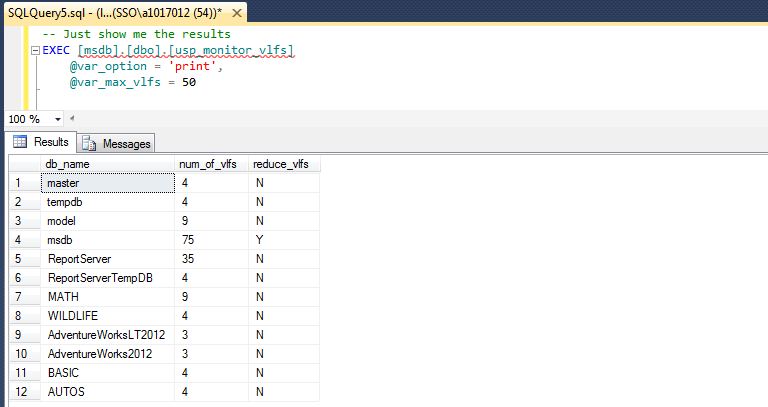

This is a sample call to display the results to a SSMS output window. It shows all databases, their virtual log file settings, and which ones exceeded the threshold.

|

1 2 3 4 |

-- Just show me the results EXEC [msdb].[dbo].[usp_monitor_vlfs] @var_option = 'print', @var_max_vlfs = 50 |

In summary, VLF’s can really slow down a system since data has to be written ahead to the log file before writing to the data file.

By making sure that you have a correctly sized log file that does not grow between transaction log backups, this problem can be avoided. Any data or log file growth is expensive in terms of processing and blocking. Growing the initial transaction log file ahead of time in larger blocks allows for a uniform block size.

See Brad’s slides for more details. I had the privilege to see this presentation at Pass Summit 2011. I hope you enjoy the enclosed code. If you find any issues with the script, please email me.